NVIDIA GeForceFX: Brute Force Attack Against the King

After NVIDIA introduced its new GeForceFX GPU at Comdex in Las Vegas in November, anticipation levels were very high. However, the company was not able to live up to its announced intention to release first samples as early as December. So it's all the more astonishing to see the hectic pace at which NVIDIA has finally launched its cards. We were given just about three days to test the card - not enough time to test all aspects of the card in full detail, but enough to bring you an extensive overview of the chip's performance.

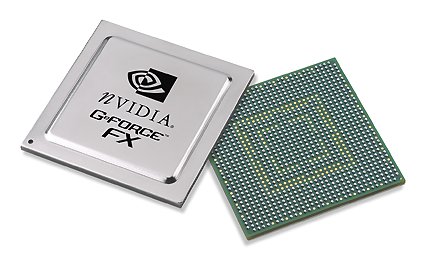

The GeForceFX GPU

We gave you full details on the features of the GeForceFX GPU in November (GeForceFX: NVIDIA goes Hollywood?). Here is a brief recap: just like the ATI Radeon 9700, the GeForceFX is a DirectX 9 card. Through its new floating-point support, the new version of Microsoft's 3D API allows for better precision than DirectX 8, including significantly improved precision in effects. The permitted length of pixel and vertex shader programs has also increased, and loops are now possible within a shader program.

Not content with only DirectX 9, NVIDIA goes much further. GeForceFX can process longer and more complex shader programs, thereby allowing for more complicated effects (see: GeForceFX: NVIDIA goes Hollywood?). In order to create these shaders, NVIDIA released the Cg compiler. Because there are currently no games in sight that can take full advantage of all of DirectX 9's capabilities, it's difficult to tell what the user gets from all this extended programmability. But it's safe to say that it doesn't do any harm.

The GeForceFX chip is the first consumer-level graphics chip to be manufactured in the 0.13µ process. This lets NVIDIA clock the chip, which is produced in a flip-chip design, at a breathtaking 500 MHz. With 125 million transistors, the GeForceFX GPU has more than twice the transistor count of a Pentium 4 CPU (55 million transistors). High power consumption aside (just like ATI's R300-based Radeon 9700 and 9500 cards, the GeForceFX requires an additional power connection), this generates a considerable amount of heat and therefore requires extensive cooling.

Compared to the GeForce4 Ti, the number of pixel pipes has been increased from four to eight. This allows the FX to process eight pixels per clock cycle with one texture each. However, the GeForce4 GPU could calculate two textures per pipe, while the FX pipes can only process one, so the advantage shrinks again with multitexturing. NVIDIA also re-worked the filtering. The performance of the FX in tri-linear and anisotropic filtering modes has increased considerably. The chip now decides internally which filter level to use. Anti-aliasing has also been optimized and is now called "Intellisample" with the FX. New in the FX are the anti-aliasing modes 6X and 8X under Direct3D.

DDR-II memory is completely new to graphics cards, and the GeForceFX GPU was designed for it. The new memory modules allow for much faster data rates than DDR modules have offered up to now. With DDR-II, as with DDR, data is transferred on both edges of the clock signal, which means two transfers per clock cycle and not four, as you might expect. The difference lies in the structure of the memory cells - instead of transferring in bursts of two, DDR-II internally transfers in bursts of four. This allows the RAM to run at significantly higher clock speeds, because the clock rate within the memory module is halved compared to DDR. With DDR-II, the data rate is doubled within the memory cells and not during the transfer. However, the GPU has to be adapted to this, since data is now transferred in bursts of four instead of two, as was the case with DDR.

The memory bandwidth of the GeForceFX is therefore calculated exactly as it is with the usual DDR memory:

128 bits / 8 bits/byte * 500 MHz * 2 (2 transfers/clock) = 16.0 GB/s

The memory bandwidth, however, is a point of criticism with the GeForceFX. 16 GB/s is much less than the 19.8 GB/s of its competitor, the ATI Radeon 9700 PRO. ATI utilizes typical DDR memory with moderate clock speeds, and instead increases the bus width from 128-bit to 256-bit in order to raise the bandwidth. NVIDIA leaves it at 128-bit and clocks the memory faster, using DDR-II, of course.
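The bandwidth arithmetic above can be reproduced with a few lines of Python. This is only a sketch: the function name is made up for illustration, and the 310 MHz memory clock used for the Radeon 9700 PRO is an assumption that does not appear in this article (it is the figure that yields the quoted 19.8 GB/s on a 256-bit bus).

```python
def memory_bandwidth_gb_s(bus_width_bits, clock_mhz, transfers_per_clock=2):
    """Peak memory bandwidth in GB/s, with 1 GB taken as 10**9 bytes."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


# GeForceFX 5800 Ultra: 128-bit bus, 500 MHz DDR-II, two transfers per clock.
geforce_fx_bw = memory_bandwidth_gb_s(128, 500)

# Radeon 9700 PRO: 256-bit bus, DDR. The 310 MHz clock is an assumption,
# not a figure taken from the article.
radeon_bw = memory_bandwidth_gb_s(256, 310)

print(f"GeForceFX 5800 Ultra: {geforce_fx_bw:.1f} GB/s")
print(f"Radeon 9700 PRO:      {radeon_bw:.1f} GB/s")
```

The calculation shows why the wider bus wins here: doubling the bus width doubles the bytes per transfer, which more than offsets the Radeon's lower memory clock.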

In order to compensate for the apparent disadvantage in memory bandwidth, NVIDIA equipped the chip with Color Compression, in addition to Z-Compression. This enables lossless compression of color data by a factor of 4:1 in real time. According to NVIDIA, this compression of color data is very effective, since even the color data on the polygon edges can be perfectly compressed. A last new feature to mention is AGP 8x, which, despite doubling the data rate from the CPU to the card, does not have much of an impact in practice. However, future games designed to take advantage of this greater bandwidth should give gamers the extra power they would expect from AGP 8x. An overview of the chip:

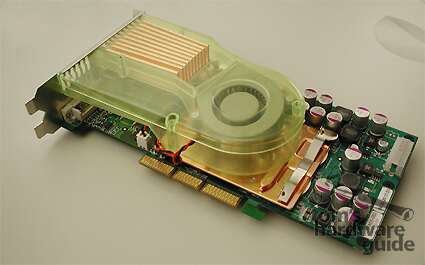

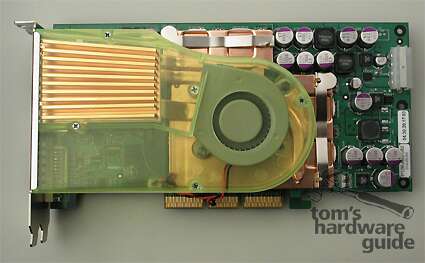

The Card

With the GeForceFX, the look of graphics cards has been re-defined. This reference card is a real monster! Because of its large cooler, the card weighs in at 610 grams/1.34 lbs - that's about three times the weight of a GeForce4 Ti4800 or a Radeon 9700 PRO (approx. 200 grams/0.44 lbs). But more about the cooler later.

The GeForceFX (NV30) is being introduced in two versions. Apart from the card that we look at in this review, the GeForceFX 5800 Ultra (500 MHz core, 1000 MHz DDR-II), there will also be a "normal" FX 5800 that will run at slower clock speeds (400 MHz core, 800 MHz DDR-II). Some time later, NVIDIA will launch the NV31 and NV34 variants, the details of which are not yet known.

The chip runs on an extravagant 12-layer board. Rumors have been going around that NVIDIA will only make complete cards available to card manufacturers and not, as is typical, the individual components - these rumors are incorrect. Although NVIDIA will be delivering a few complete cards in the beginning, so that the manufacturers can quickly introduce cards to the market, the usual kits (chips, memory, cooler) will also be made available. The manufacturers are also free to choose the cooler, as long as it meets the cooling requirements set down by NVIDIA.

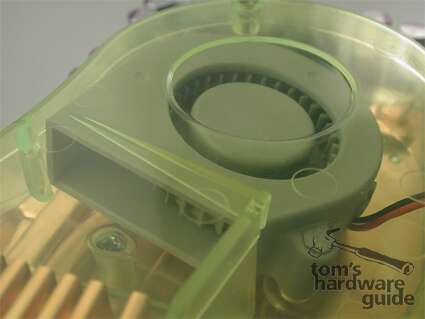

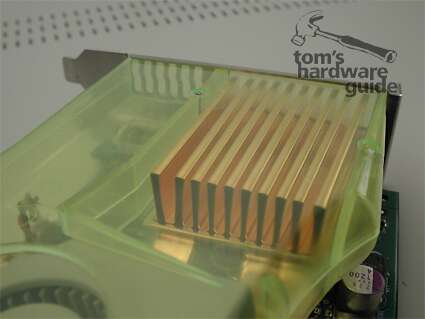

The Cooler

The GeForceFX 5800 Ultra produces a lot of heat. Hence, the appropriately extravagant cooler. A fan draws in cool air from outside the PC case through a channel, and another fan conducts the warm air out of the case. A heatpipe carries the heat from the chip directly to the cooling fins, which lie in the flow of air leading out of the case. This takes up quite a bit of space, causing the card's dimensions to increase, with the consequence that the first PCI slot of the mainboard is completely covered by it. Even the back of the card is equipped with a large passive heatsink to ensure proper cooling. Despite this complex cooling solution, the card becomes quite hot during operation. While testing the card in an open environment (i.e., outside of a PC case), the heatsink on the back of the card reached over 68° Celsius/154.4° Fahrenheit. In a closed PC case, the temperature should increase even more.

A further problem is the noise level. The fan produces an incredible racket on par with a vacuum cleaner - there's simply no other way to describe it. You can hear the card even if you're in another room of the house.

The GeForceFX 5800 Ultra recognizes three different operating modes. In 2D Desktop Mode, the chip and memory are clocked at 300/600 MHz. Here, the fan runs at a limited rpm and makes less noise. When you start up a 3D application (3D Performance Mode), the clock speeds increase to 500/1000 MHz, and the fan runs at maximum rpm. A third mode, Intermediate Mode, has not been integrated into the driver yet. This mode is meant to relieve the PC's power supply unit as well as the user's eardrums. NVIDIA strongly recommends using a PSU of at least 300W!

When we pointed out the immense noise generated by the card, NVIDIA responded by attributing it to the prototype status of the card. In the final design, the noise level is supposed to be reduced considerably. In its current state, the noise produced by the card is really unbearable. However, the noise is due to the fan, which has a very high rotation speed, and it's difficult to imagine how it could be reduced to a level that could be considered acceptable.

To give you an idea of how loud this test card is, we've recorded the noise with a microphone and made it available as an MP3 file. As a point of comparison, we've also recorded an ATI Radeon 9700 PRO at an identical recording level and microphone position. The CPU cooler we used here is an Alpha PAL 8045 Silent - the noise generated by its fan is negligible and plays no role in this recording. This is what you hear in the MP3 file:

Naturally, the noise level in this recording depends on the playback volume. A good reference for setting the correct volume is the beep your PC makes when it powers on (with an open case). When you compare these recordings, it becomes clear why NVIDIA simply must continue to work on reducing the noise level, as they have promised. It's still a puzzle as to how this is going to happen. Even the noise volume in 2D Desktop Mode would be considered too loud.

The Performance

Let's get down to the tests. Because there are no games on the market yet that can make use of DirectX 9 capabilities, we're left focusing on DirectX 8/8.1 games. We put together an extensive repertoire of benchmarks and games in order to make a comparison between the FX 5800, the GeForce4 Ti4800 and the ATI Radeon 9700 PRO. In addition to the simple standard tests, we've also tested the performance in anti-aliasing and anisotropic filtering, as well as a combination of the two. Our test platform consisted of an ASUS A7N8X Deluxe motherboard (nForce2) and an Athlon XP 2700+ with 512 MB RAM under Windows XP.

Gaming Benchmarks

Unreal Tournament 2003 Flyby

In Unreal Tournament 2003 (Flyby Test), the GeForceFX 5800 Ultra is clearly ahead of the Radeon 9700 PRO. The GeForce4 Ti4800 falls considerably behind.

Unreal Tournament 2003 Botmatch

In the Botmatch test, a heavy load is put on the CPU. However, the graphics card also has more on its hands with additional objects (the figures) and effects (gunfire, explosions). Here, the Radeon and the FX are nearly neck and neck. It's only at 1600x1200 that the FX manages to pull ahead.

The above graphic was created with the utility UTBench v1.32. Further information about this program can be found here: Ben's Custom Cases. Identical settings were used at a resolution of 1280x1024. The result shows the frame rate curve over the course of the Antalus Flyby benchmark. The curves were combined into a single image using an image editor.

3D Mark 2001 SE

The advantage of the GeForceFX in 3D Mark 2001 is relatively small, and falls below 1000 points in high resolution modes.

Serious Sam 2

Z-Buffer Problems

Just a few months ago, there was some discussion about a Z-Buffer problem with GeForce4 Ti cards in the game Serious Sam. With these cards, the game at 32-bit color depth runs with a 16-bit Z-Buffer instead of a 24-bit one. This seems to be caused by the "ChoosePixelFormat" command, which is used by applications in order to determine the size of the Z-Buffer at the start. What happens is that the game requests a 32-bit Z-Buffer, which the card cannot deliver, because 8 bits are already reserved for the stencil buffer. In response, the GeForce4 and GeForceFX fall back to 16-bit, rather than 24-bit (without the 8-bit stencil). By contrast, the GeForce3 is set automatically to the correct or desired 24-bit. This error also does not occur with the ATI Radeon 9700 PRO, which sets itself to 24-bit Z-Buffering and 8-bit stencil. This hints at a problem in the GeForce4 and FX driver. Croteam, at least, points to an error in the NVIDIA driver. In numerous discussions about this topic on the Internet, some programmers have also confirmed an incorrect Z-Buffer assignment in their OpenGL applications when using the "ChoosePixelFormat" command. This results in Z-errors, caused by decreased precision. Here are a few screen shots from Serious Sam 2 when run with the GeForceFX:

NVIDIA, in contrast to ATI, does not plan to offer any setting options for the Z-Buffer depth in the driver, which means that the problem can only be worked around when the application itself provides such an option. This is the case with Serious Sam 2. With the console command /gap_iDepthBits=24, you can force a Z-Buffer that the GeForce4 Ti and GeForceFX can display without a problem. This is an easy way to get rid of the display problems in SS2.

It has been presumed that NVIDIA cards gain a performance advantage through the smaller Z-Buffer - this can be confirmed only in part. The benchmark results of the GeForceFX under 16-bit and 24-bit Z-Buffer reveal only a very slight difference. And there's still the disadvantage of the display error. By comparison, the GeForce4 Ti cards chalk up better scores with 16-bit Z-Buffer than with 24-bit. Apparently, the problem is restricted to OpenGL. In Direct3D, the problem could not be seen. In games with the Q3 engine, this problem did not occur either, since here a 24-bit Z-Buffer is requested, which the cards can then deliver.

According to NVIDIA, the problem is that Serious Sam does not define the Z-Buffer precisely:

"Serious Sam does not specify a Z-buffer depth when enabling Z. By default, our driver will select the first Z-depth available, which is 16 bits. For the majority of the scenes in Serious Sam, 16 bit Z is sufficient. There are, however, a few areas where some Z artifacts can be seen. You can force Serious Sam to specify 24-bit Z by doing the following: (type "~" to bring the console) In this mode, the Z artifacts are eliminated (or greatly reduced). We have seen little, if any, impact on performance using 24-bit Z. We have already addressed this with Croteam, and this issue is fixed in the upcoming patch, but the patch is only BETA right now."

This contradicts the findings of programmers in the Web forums - they report that the problem occurs when a 32-bit Z-Buffer is requested via "ChoosePixelFormat". Instead of choosing 24-bit mode, which would have made sense, the NVIDIA driver selects 16-bit mode, according to the reports. The problem could only be solved through a "Force 24-bit Z" option in the OpenGL driver, which ATI offers in its Radeon drivers, for example.
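To illustrate why a 16-bit Z-Buffer produces visible artifacts where a 24-bit one does not, here is a rough Python sketch. It assumes the standard perspective depth mapping, d(z) = far/(far - near) * (1 - near/z), and estimates the smallest depth difference the buffer can still resolve at a given distance. The near and far plane values and the sample distance are hypothetical and are not taken from Serious Sam.

```python
def depth_resolution(z, near, far, bits):
    """Approximate smallest resolvable depth difference at distance z.

    Assumes the standard perspective depth mapping
        d(z) = far / (far - near) * (1 - near / z)
    quantized into 2**bits - 1 steps. The derivative of d with respect
    to z is far * near / ((far - near) * z**2), so a single buffer step
    covers roughly z**2 * (far - near) / (far * near * steps).
    """
    steps = 2 ** bits - 1
    return (z * z * (far - near)) / (far * near * steps)


# Hypothetical scene setup (not taken from the game): near plane at 1 unit,
# far plane at 1000 units, object at a distance of 100 units.
NEAR, FAR, DISTANCE = 1.0, 1000.0, 100.0

res16 = depth_resolution(DISTANCE, NEAR, FAR, 16)
res24 = depth_resolution(DISTANCE, NEAR, FAR, 24)
ratio = res16 / res24

print(f"16-bit Z: surfaces closer than ~{res16:.4f} units cannot be separated")
print(f"24-bit Z: surfaces closer than ~{res24:.6f} units cannot be separated")
print(f"24-bit Z is about {ratio:.0f} times finer")
```

Whatever planes are chosen, the 24-bit buffer is about 256 times finer than the 16-bit one, and the resolution degrades with the square of the distance, which is why the artifacts tend to appear on distant, closely spaced surfaces.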

In Serious Sam 2, the GeForceFX 5800 Ultra is clearly superior to the Radeon 9700 PRO. The surprising aspect is how well the GeForce4 Ti4800 performs against the ATI cards. Its advantage has an obvious explanation - the GF4 uses reduced Z-Buffer precision (16-bit), which is the default for NVIDIA cards in games. In the tests, the performance of the Radeon 9700 PRO in 16-bit proved to be better than with 24-bit.

Aquanox

Aquanox makes heavy use of pixel and vertex shaders in the DirectX 8 standard. Here, the GeForceFX chalks up full points.

Jedi Knight II

In the OpenGL game Jedi Knight II, based on the Quake 3 engine, card performance is restricted, first and foremost, by the CPU - when the card itself is fast enough, that is. This is the case with the Radeon 9700 PRO and the GeForceFX 5800 Ultra. Note that the ATI boards have a somewhat lower performance level, which suggests that the drivers are less optimized for this game engine.

Max Payne

In Max Payne, the GeForceFX scores against the Radeon once more. Here, the GeForce4 Ti4800 is clearly beaten.

Comanche 4

Performance in Comanche 4 also depends heavily on the CPU. This time, however, the ATI Radeon 9700 PRO manages to assert itself.

Codecreatures

The Codecreatures Benchmark is a demo for new game engines that make strong use of pixel and vertex shaders and very high resolution textures. The GeForceFX clearly wins on average frame rate. By contrast, the Radeon scores on average polygon throughput (avg MPolys/s).

Conclusion: Standard Gaming Benchmarks

In the standard tests (without FSAA and anisotropic filtering) the GeForceFX comes out on top. As resolution increases, however, its lead decreases.

3D Application Benchmarks

Spec Viewperf 7

Viewperf tests the performance of cards with 3D workstation programs. An exact description of the individual results can be found here: SPECviewperf 7 Overview

Quality Benchmarks

4x FSAA

Unreal Tournament 2003

At lower resolutions, the GeForceFX is ahead, but at higher resolutions it loses its lead. At 1600x1200, the Radeon is slightly ahead.

Serious Sam 2

The situation in Serious Sam is similar. Here, however, the Radeon manages to achieve a clear lead over the GeForceFX at 1600x1200. The GeForce4 Ti4800 falls far behind.

8x Anisotropic

Unreal Tournament 2003

With Unreal Tournament, the GeForceFX has a firm lead in anisotropic filtering (medium setting - Performance Balanced).

Serious Sam 2

In Serious Sam, both cards start off about the same, but later, the Radeon 9700 PRO manages to push ahead.

4x FSAA & 8x Anisotropic

Unreal Tournament 2003

At maximum quality, the GeForceFX also remains in control, although its lead is only slight.

Serious Sam 2

In Serious Sam under OpenGL, scores favor the Radeon 9700 PRO once more.

Conclusion: Quality Test

The results show a mixed picture. Under Direct3D in Unreal Tournament, the GeForceFX is slightly ahead most of the time. In Serious Sam 2, however, the Radeon 9700 PRO is most frequently in the lead. This gives the impression that the GeForceFX 5800 Ultra suffers a bit from its smaller memory bandwidth.

Theoretical Benchmarks

Overdraw - Villagemark

Villagemark was used by PowerVR to show the advantages of tiled rendering architecture. With this type of 3D chip, objects that are covered by other triangles are not rendered, thereby saving on bandwidth. Both NVIDIA and ATI have built similar mechanisms into their 3D chips. Villagemark is a good test of how effective overdraw prevention is in the cards. At 1024x768, Villagemark unfortunately gave an incorrect result for the GeForceFX - 0 fps. Clearly, this result cannot be right.

Vertex Shader - Matrox Sharkmark

Matrox Sharkmark tests the performance of vertex shaders. Here, the ATI Radeon 9700 PRO manages to beat the FX - and this is also confirmed by 3D Mark 2001. More details on that below.

3D Mark 2001: Individual Tests

Nature

This test makes heavy use of DirectX 8 pixel shader effects.

Fillrate Single Texturing

The result here is very surprising. The single-texture fillrate of the GeForceFX should have been much higher because of the high clock speed of the chip.

In multitexturing, however, the FX takes full advantage of its higher clock speed. Yet the FX is still further from its theoretical maximum (4000 Mtexel/s) than the Radeon (2600 Mtexel/s).
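The theoretical maxima quoted above follow from pipelines times textures per pipe times core clock. The short Python sketch below reproduces them; the function names are invented for illustration, and the eight-pipeline, one-texture layout for the Radeon 9700 PRO is an assumption inferred from its 2600 Mtexel/s figure at 325 MHz. The measured chart values are not reproduced here, so `fraction_of_peak` is left for the reader to feed with benchmark results.

```python
def peak_texel_fillrate(pipelines, textures_per_pipe, core_mhz):
    """Theoretical texel fillrate in Mtexel/s."""
    return pipelines * textures_per_pipe * core_mhz


def fraction_of_peak(measured_mtexels, peak_mtexels):
    """Share of the theoretical maximum that a measured result reaches."""
    return measured_mtexels / peak_mtexels


# GeForceFX 5800 Ultra: eight pipes, one texture per pipe, 500 MHz core.
fx_peak = peak_texel_fillrate(8, 1, 500)

# Radeon 9700 PRO: 325 MHz core; the 8x1 layout is an assumption inferred
# from the 2600 Mtexel/s figure, not stated in the article.
radeon_peak = peak_texel_fillrate(8, 1, 325)

print(f"GeForceFX 5800 Ultra peak: {fx_peak} Mtexel/s")
print(f"Radeon 9700 PRO peak:      {radeon_peak} Mtexel/s")
```

Comparing each card's measured score against its own peak, rather than comparing raw scores, is what shows the FX falling further short of its theoretical maximum than the Radeon.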

In this test, the FX clearly puts some distance between itself and the Radeon.

Vertex Shader Speed

Here, the Radeon 9700 PRO is a nose ahead, as was indicated in Sharkmark.

In the pixel shader test, the GeForce scores the points, as expected, due to the high clock speed of the chip.

Conclusion

NVIDIA takes the crown! No question about it - the GeForceFX 5800 Ultra is faster than its competitor, ATI's Radeon 9700 PRO, in the majority of the benchmarks. However, its lead is only slight, especially compared to the distance that ATI put between its Radeon 9700 PRO and the Ti 4600. Still, when compared to its predecessor, the GeForce4 Ti, the FX represents a giant step forward.

The GeForceFX 5800 Ultra is irrefutably the fastest card in most of the tests - but at what price? The power of the FX relies on high clock speeds, which in turn require high voltages and produce an enormous amount of heat. The consequence is that extensive (and expensive) cooling is necessary. Add to that the DDR-II memory, the price of which is quite high, due to the small production numbers. Even the 12-layer board layout is complex and expensive.

It will be difficult for NVIDIA to push its GeForceFX 5800 Ultra. Radeon 9700 PRO cards are only slightly slower, and, because they've been out on the market for months now, they're much less expensive. Also, because they deliver their 3D performance at much lower clock speeds, they do not require extensive cooling - and that's nice for your pocketbook as well as your ears. Still, despite expectations to the contrary, the official price for the FX 5800 Ultra is $399 plus tax, which seems pretty aggressive and attractive. This makes it identical to the launch price of the GeForce4 Ti4600 and the ATI Radeon 9700 PRO. The "normal" version of the 5800 will be somewhat less expensive.

It's surprising that the GeForceFX GPU, clocked at 500 MHz, only gains a small lead over the R300 GPU (VPU), which is modestly clocked at 325 MHz in comparison. It remains to be seen how long NVIDIA, with its FX 5800, can maintain a lead over ATI. ATI has already started to hint at a faster-clocked R350 to come in the next few weeks (according to rumor, it will have a 400-425 MHz core and 800 MHz memory).

Nevertheless, enthusiasts will, without a doubt, love the GeForceFX 5800 Ultra. It is a monster card! And its look is as spectacular as that of the 3dfx Voodoo5 6000 at the time of its launch.
