PC Graphics Beyond XBOX: NVIDIA Introduces GeForce4


The New Year has just begun, and we knew it wouldn't take long for NVIDIA to launch its next chip. NVIDIA's arch-enemy ATi had only just caught up with GeForce3 technology, so it was time for the Santa Clara-based 'GPU' developer to make another leap forward.

NVIDIA is lucky that in 3D graphics the situation is different from what we have seen lately in the microprocessor arena. It has become increasingly difficult these days to justify the purchase of a new CPU, because even year-old processors are still very much up to the job. Nobody really needs Intel's or AMD's 2+ GHz monsters.

In 3D, things are better. If you look at today's 3D graphics you will see that there is still a lot of room for improvement. Even though 3D-hardware is now able to display acceptable high-resolution 3D scenes at reasonable frame rates, we still could not possibly mistake them for movies of real people and objects.

That is why NVIDIA is able to crank 3D 'realism' up another notch with GeForce4 Ti today. We will see more impressive effects, more realistic-looking scenes and more lifelike motion in the demos for NVIDIA's latest high-end product.

NVIDIA has also recognized that the majority of people are unwilling to pay huge sums for this new level of 3D realism. Those people know that they can have fun even with games that are more than a year old. For those guys, NVIDIA is introducing GeForce4 MX, a derivative of GeForce2 that lacks the funky features of GeForce3 and GeForce4 Ti.

The high-end product line that NVIDIA is introducing today, with all the bells and whistles, carries the name 'GeForce4 Ti'. The flagship of this line, the GeForce4 Ti4600, was sent out to reviewers worldwide.

Besides the GeForce4 Ti4600, there will also be a GeForce4 Ti4400 and a GeForce4 Ti4200, just to complete the confusion. The following table shows the basic specs of the new GeForce4 Ti card series, compared to NVIDIA's former flagship product, the GeForce3 Ti500.

| | GeForce4 Ti4600 | GeForce4 Ti4400 | GeForce4 Ti4200 | GeForce3 Ti500 |
|---|---|---|---|---|
| Chip Clock | 300 MHz | 275 MHz | 225 MHz | 240 MHz |
| Memory Clock (DDR, effective) | 650 MHz | 550 MHz | 500 MHz | 500 MHz |
| Amount of Memory | 128 MB | 128 MB | 128 MB | 64 MB |
| Memory Bandwidth | 10,400 MB/s | 8,800 MB/s | 8,000 MB/s | 8,000 MB/s |
| Theoretical Fill Rate | 1,200 Mpixel/s | 1,100 Mpixel/s | 900 Mpixel/s | 960 Mpixel/s |
| Price | $399 | $299 | $199 | $299 |
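The bandwidth and fill-rate rows in the table above follow directly from the clock rows. A minimal sketch of that arithmetic, assuming a 128-bit memory bus and four pixel pipelines on all four chips (neither figure appears in the table, but together they reproduce its numbers); MB here means 10^6 bytes:

```python
# Theoretical peak figures for the GeForce4 Ti / GeForce3 Ti500 table.
# Assumptions (not stated in the table): 128-bit memory bus, 4 pixel pipelines.
BUS_WIDTH_BITS = 128
PIXEL_PIPELINES = 4

cards = {
    # name: (chip clock in MHz, effective DDR memory clock in MHz)
    "GeForce4 Ti4600": (300, 650),
    "GeForce4 Ti4400": (275, 550),
    "GeForce4 Ti4200": (225, 500),
    "GeForce3 Ti500": (240, 500),
}

def memory_bandwidth_mb_s(effective_mem_mhz: int) -> int:
    """Bytes per transfer times millions of transfers per second."""
    return effective_mem_mhz * BUS_WIDTH_BITS // 8

def fill_rate_mpixel_s(chip_mhz: int) -> int:
    """One pixel per pipeline per clock."""
    return chip_mhz * PIXEL_PIPELINES

for name, (chip, mem) in cards.items():
    print(f"{name}: {memory_bandwidth_mb_s(mem):,} MB/s, "
          f"{fill_rate_mpixel_s(chip):,} Mpixel/s")
```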

Before we get into the technology behind the GeForce4 Ti series, let's have a quick peep at the basic specs of NV25:

- 63 million transistors (only about 6 million more than GeForce3's 57 million)

- Manufactured in TSMC's 0.15 µm process

- Chip clock 225 - 300 MHz

- Memory clock 500 - 650 MHz

- Memory bandwidth 8,000 - 10,400 MB/s

- TnL Performance of 75 - 100 million vertices/s

- 128 MB frame buffer by default

- nfiniteFX II engine

- Accuview Anti Aliasing

- Light Speed Memory Architecture II

- nView

The GeForce4 Ti cards will have the following specs:

- 8-layer design

- Samsung 4Mx32 high-speed memory in micro-BGA packages (2.8 ns on the Ti4600)

- Video-out still requires a third-party chip
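The '2.8 ns' figure in the list above is a speed grade, and a quick calculation shows how much headroom it leaves. This sketch assumes the usual convention that the ns rating is the chip's minimum cycle time, so its reciprocal is the highest rated clock, and that the Ti4600's 650 MHz effective DDR clock means a 325 MHz actual clock:

```python
def rated_clock_mhz(cycle_time_ns: float) -> float:
    """Highest clock (MHz) for a memory chip with the given minimum cycle time."""
    return 1000.0 / cycle_time_ns

# Ti4600 memory: 650 MHz effective DDR, i.e. 325 MHz actual clock.
actual_clock_mhz = 650 / 2
rated = rated_clock_mhz(2.8)          # assumed rating of the 2.8 ns parts
headroom = rated - actual_clock_mhz
print(f"rated {rated:.0f} MHz, used {actual_clock_mhz:.0f} MHz, "
      f"headroom {headroom:.0f} MHz")
```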

You will see in the benchmark discussion below that the GeForce4 Ti4600 performs considerably better than the already highly respected GeForce3 Ti500. NV25 did not achieve this impressive performance improvement through groundbreaking new technology, but rather by tweaking and fine-tuning technology that can already be found in GeForce3 (NV20).

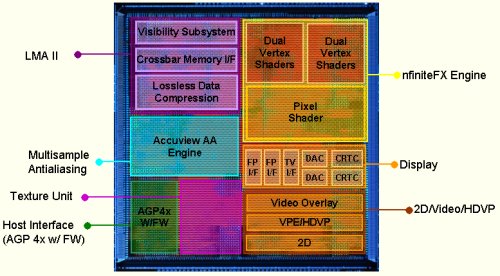

NV25's nfiniteFX II Vertex Shaders

If you remember what 'nfiniteFX' stood for in GeForce3, then you will certainly know what 'nfiniteFX II' is all about. NVIDIA chose this name for their programmable vertex and pixel shader engine that was first found in GeForce3.

In case you have no idea what 'vertex shader' stands for, I would like to ask you to please follow this link. You will find a very detailed explanation of a vertex shader and what it is good for.

Now, while GeForce3 had only one vertex shader, GeForce4 Ti comes equipped with two of them. Two vertex shaders in one chip aren't entirely new, since the NVIDIA chip that powers Microsoft's Xbox also has two vertex shaders. NV25 uses an improved version of that design.

It is easy to imagine that two parallel vertex shaders can process many more vertices in the same amount of time. The two units are multithreaded, and the threading is handled on-chip, so the performance benefit is transparent to the application and the API. NV25 handles instruction dispatch itself, but it has to make sure that each vertex shader works on a different vertex for the parallelism to pay off. The vertex shaders were also tuned relative to the original version found in GeForce3, cutting instruction latencies.
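To illustrate why each unit must get its own vertex, here is a hypothetical software model of the dispatch, not NVIDIA's actual hardware logic: vertices are handed out round-robin so no vertex goes to two units, results are written back in their original order, and the elapsed time is set by the busiest unit. `vertex_program` and the 10-cycle cost per vertex are made-up placeholders.

```python
from dataclasses import dataclass

@dataclass
class Vertex:
    x: float
    y: float
    z: float

def vertex_program(v: Vertex) -> Vertex:
    """Hypothetical stand-in for a vertex shader: a simple scale and offset."""
    return Vertex(2 * v.x + 1, 2 * v.y + 1, v.z)

def dispatch(vertices, num_units=2, cycles_per_vertex=10):
    """Give each unit different vertices (round-robin); return results in input order."""
    queues = [[] for _ in range(num_units)]
    for index, v in enumerate(vertices):
        queues[index % num_units].append((index, v))  # each vertex goes to exactly one unit
    results = [None] * len(vertices)
    for queue in queues:
        for index, v in queue:
            results[index] = vertex_program(v)  # written back to its original slot
    # The units run side by side, so the busiest unit sets the total time.
    total_cycles = max(len(q) for q in queues) * cycles_per_vertex
    return results, total_cycles

verts = [Vertex(float(i), float(i), 0.0) for i in range(7)]
one_unit, cycles_one = dispatch(verts, num_units=1)
two_units, cycles_two = dispatch(verts, num_units=2)
print(one_unit == two_units, cycles_one, cycles_two)
```

Because the output order does not depend on the number of units, the application gets identical results either way; only the cycle count changes, which is the kind of transparency described above.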

In summary, the GeForce4 Ti4600 is able to process about 3 times as many vertices as the GeForce3 Ti500, because it has twice as many vertex shaders, because they are more advanced, and because they are clocked higher.
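Taking the '3 times' figure at face value, it can be split into the three factors just named. This is our own back-of-the-envelope decomposition, assuming the factors simply multiply and using the 300 MHz and 240 MHz chip clocks from the table; $g$ stands for the per-unit, per-clock gain of the tuned shaders, which comes out at roughly 20%:

$$
3 \approx \underbrace{2}_{\text{vertex shaders}} \times \underbrace{\frac{300\ \text{MHz}}{240\ \text{MHz}}}_{=\,1.25} \times g
\quad\Longrightarrow\quad
g \approx \frac{3}{2 \times 1.25} = 1.2
$$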

NV25's nfiniteFX II Pixel Shaders

Please go here to learn more about pixel shaders.

NVIDIA also improved the pixel shader functionality of GeForce4 Ti. The new chip supports pixel shader versions 1.2 and 1.3, but not ATi's version 1.4 extension.

Here are the new pixel shader modes:

- OFFSET_PROJECTIVE_TEXTURE_2D_NV
- OFFSET_PROJECTIVE_TEXTURE_2D_SCALE_NV
- OFFSET_PROJECTIVE_TEXTURE_RECTANGLE_NV
- OFFSET_PROJECTIVE_TEXTURE_RECTANGLE_SCALE_NV
- OFFSET_HILO_TEXTURE_2D_NV
- OFFSET_HILO_TEXTURE_RECTANGLE_NV
- OFFSET_HILO_PROJECTIVE_TEXTURE_2D_NV
- OFFSET_HILO_PROJECTIVE_TEXTURE_RECTANGLE_NV
- DEPENDENT_HILO_TEXTURE_2D_NV
- DEPENDENT_RGB_TEXTURE_3D_NV
- DEPENDENT_RGB_TEXTURE_CUBE_MAP_NV
- DOT_PRODUCT_TEXTURE_1D_NV
- DOT_PRODUCT_PASS_THROUGH_NV
- DOT_PRODUCT_AFFINE_DEPTH_REPLACE_NV

Explaining each new mode would go too far, but I would like to mention GeForce4 Ti's new support for z-correct bump mapping, which combats the artifacts seen where a bump-mapped surface intersects other geometry, such as the water of a lake or river where it touches land.
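A toy model of why per-pixel depth matters here, assuming the bump map's height simply pushes each pixel's depth toward the viewer (the hardware's actual per-pixel math is not described in this article). One scanline of terrain, whose polygon lies exactly at water level, is depth-tested against the water plane; the height values are made up:

```python
def visible(terrain_depths, water_depth):
    """Per-pixel depth test: the nearer surface (smaller depth) wins."""
    return ["land" if d < water_depth else "water" for d in terrain_depths]

WATER_DEPTH = 10.0
POLYGON_DEPTH = 10.0                               # terrain polygon sits at water level
bump_heights = [0.3, -0.2, 0.5, -0.4, 0.1, -0.1]   # hypothetical height-map samples

# Ordinary bump mapping changes only the lighting: every pixel keeps the
# polygon's depth, so the shoreline cannot follow the bumps.
plain = visible([POLYGON_DEPTH] * len(bump_heights), WATER_DEPTH)

# Z-correct bump mapping: each pixel's depth is offset by its bump height.
z_correct = visible([POLYGON_DEPTH - h for h in bump_heights], WATER_DEPTH)

print(plain)
print(z_correct)
```

With `plain`, the whole scanline resolves to a single surface, so the land/water boundary can only run along polygon edges; with `z_correct`, it follows the height map.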

Another important improvement was made with the DXT1 compressed texture quality, as you will see in our screen shots.

Finally, NVIDIA tuned a number of pixel shader pipeline paths, which dramatically accelerated the rendering of scenes with 3 or 4 textures per pixel.

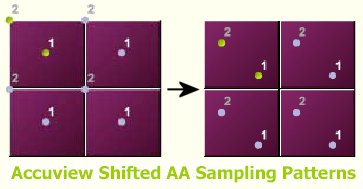

Accuview - Improved Anti Aliasing

When GeForce3 was released last year, NVIDIA introduced what it called 'HRAA' (high-resolution anti-aliasing), based on multisampling AA. Please follow this link for more details.

GeForce4 comes with 'Accuview', which is supposed to be an advanced form of multisampling AA, improved in terms of quality as well as performance.

NVIDIA is using 'new sample positions' that are supposed to improve AA quality, because less error is accumulated, especially when using Quincunx AA. NVIDIA has a white paper about this procedure, but I suggest you save your time and do without it, because it doesn't really explain much anyway.

The filtering step that combines the (multi)samples into the final anti-aliased frame has been tweaked to save one complete frame buffer write, which results in a dramatic improvement in AA performance.

Basically, Accuview is supposed to make AA look better and run faster. GeForce4 runs the NVIDIA-specific 'Quincunx'-AA just as fast as 2x-AA. Besides that, NVIDIA added another mode called '4xS'. This mode is supposed to look a lot better than 4x AA mode, due to a 50% increase in subpixel coverage. Unfortunately, this mode is only available for Direct3D games, not for OpenGL ones.
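Quincunx is generally described as a 2x multisample mode resolved with a five-tap filter: half the weight on the pixel's own sample and one eighth on each of four samples at the surrounding pixel corners, which are shared with neighboring pixels. A minimal sketch of that resolve, assuming each pixel stores one sample at its center and the corner samples live in a separate grid one larger in each direction (a simplification of the real sample layout):

```python
def quincunx_resolve(centers, corners):
    """Resolve 2x multisampled data with a 5-tap quincunx filter.

    centers: H x W grid, one sample at each pixel center.
    corners: (H+1) x (W+1) grid, samples at pixel corners, shared by neighbors.
    Returns an H x W image: 1/2 of the center plus 1/8 of each surrounding corner.
    """
    height, width = len(centers), len(centers[0])
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            corner_sum = (corners[y][x] + corners[y][x + 1]
                          + corners[y + 1][x] + corners[y + 1][x + 1])
            row.append(0.5 * centers[y][x] + 0.125 * corner_sum)
        image.append(row)
    return image

# A pixel next to an edge: its center sample is dark (0.0), but the two
# corner samples on its right side already fall on the bright side (1.0).
print(quincunx_resolve([[0.0]], [[0.0, 1.0], [0.0, 1.0]]))
```

Because four of the five taps come from samples shared with neighboring pixels, the filter also softens texture detail, which fits the less sharp Quincunx screenshots discussed later.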

Finally, Accuview supports anisotropic filtering, to improve the look of textures that extend from the foreground into the background.

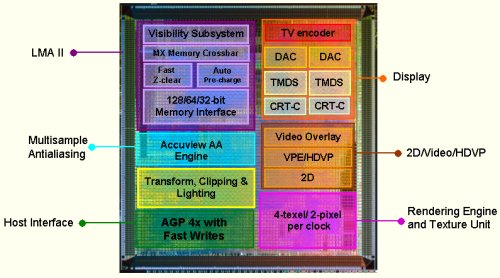

LMA II - The New Light Speed Memory Architecture

For me, the most important feature, and the one that's predominantly responsible for GeForce4 Ti's impressive performance leap over GeForce3, is the new improved 'LMA II'. Please follow this link to learn what 'LMA' stood for in GeForce3. It explains why a special memory controller makes so much sense in 3D-graphics. I will not explain this again here.

LMA II is GeForce3's LMA with each component tweaked, tuned and advanced.

- Crossbar Memory Controller

GeForce3 was already equipped with this feature, which enables it to access memory in 64-bit and 128-bit as well as the usual 256-bit chunks, significantly improving memory bandwidth usage. For LMA II, NVIDIA improved the load balancing algorithms for the different memory partitions and the priority scheme, to make more efficient use of memory across the four partitions.

- Visibility Subsystem - Z-Occlusion Culling

This feature was already found in GeForce3, but for NV25 it has been tuned to cull more pixels while using less memory bandwidth to do it. The culling is now done in an on-chip culling surface cache to avoid off-chip memory accesses.

- Lossless Z-Buffer Compression

This is another feature that was already included in GeForce3. However, in LMA II the 4:1 compression is supposed to succeed more often, thanks to a new compression algorithm.

- Vertex Cache

The vertex cache stores vertices after they are sent across the AGP. It makes the AGP more efficient by avoiding multiple transmissions of the same vertices (e.g. for primitives that share edges).

- Primitive Cache

Assembles vertices after processing (after the vertex shader) into fundamental primitives to pass on to triangle setup.

- Dual Texture Caches

These were already found in GeForce3. The new cache algorithms 'look ahead' more efficiently in cases of multitexturing or higher-quality filtering. This contributes to the significantly improved 3- and 4-texture performance of GeForce4 Ti.

- Pixel Cache

This cache at the end of the rendering pipeline is a coalescing cache, very similar to the 'write combining' feature of Intel and AMD processors. It waits until a certain number of pixels have been drawn before writing them to memory in burst mode.

- Auto Pre-charge

Memory banks need to be pre-charged before they can be read, adding a nasty clock penalty to every read in a new bank of memory. To avoid this waste of time, GeForce4 Ti is able to pre-charge memory banks ahead of time, according to a prediction algorithm.

- Fast Z-Clear

This feature has been around for a while; it was first used on ATI's Radeon chip. It simply sets a flag for a defined area of the frame buffer, so that instead of filling the whole frame buffer area with zeros, only the flag has to be written, saving memory bandwidth.
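Fast Z-clear and Z-occlusion culling both rely on small per-tile records kept on-chip instead of touching the Z-buffer in memory. A toy model of both, assuming 4x4-pixel tiles and only whole-tile, constant-depth draws to keep it short; the tile size and the class and method names are hypothetical, not NVIDIA's:

```python
TILE = 4      # hypothetical tile size in pixels
FAR = 1.0     # depth value that a clear represents

class TiledDepthBuffer:
    """Toy model: per-tile 'cleared' flags (fast Z-clear) and per-tile
    farthest depth (occlusion culling), both assumed to live on-chip."""

    def __init__(self, width, height):
        self.tiles_x = width // TILE
        tiles = self.tiles_x * (height // TILE)
        self.depth = [[FAR] * width for _ in range(height)]  # off-chip Z-buffer
        self.cleared = [True] * tiles
        self.tile_max = [FAR] * tiles
        self.memory_writes = 0

    def clear(self):
        # Fast Z-clear: reset the small per-tile records; the Z-buffer is untouched.
        self.cleared = [True] * len(self.cleared)
        self.tile_max = [FAR] * len(self.tile_max)

    def draw_tile(self, tx, ty, depth):
        """Draw a tile-sized block at constant depth; return False if it was culled."""
        t = ty * self.tiles_x + tx
        if depth >= self.tile_max[t]:
            return False  # behind everything already in the tile: no memory traffic
        # Every pixel in the tile sits at tile_max, so a nearer full-tile draw wins everywhere.
        for y in range(ty * TILE, (ty + 1) * TILE):
            for x in range(tx * TILE, (tx + 1) * TILE):
                self.depth[y][x] = depth
                self.memory_writes += 1
        self.cleared[t] = False
        self.tile_max[t] = depth
        return True

    def read(self, x, y):
        t = (y // TILE) * self.tiles_x + x // TILE
        return FAR if self.cleared[t] else self.depth[y][x]

zb = TiledDepthBuffer(8, 8)
print(zb.draw_tile(0, 0, 0.5), zb.draw_tile(0, 0, 0.7), zb.memory_writes)
zb.clear()
print(zb.read(0, 0), zb.depth[0][0])
```

The second draw is rejected using only the on-chip record, and after `clear()` the stale 0.5 values still sit in memory but are never read, because the tile's flag says it is cleared.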

nView

All GeForce4 cards are equipped with dual 350 MHz RAMDACs as well as TMDS transmitters for dual CRT or flat panel setups. On top of that, NVIDIA supplies a plethora of software features that let you do all kinds of things with your personal dual-display setup. A closer look at this feature is beyond the scope of this article.

'MX', the label for NVIDIA's value chips, has made a big jump. So far, we only knew 'MX' from GeForce2 MX. There never was a GeForce3 MX, but now NVIDIA is introducing the GeForce4 MX line. We were supplied with the flagship of this line of value 3D cards, the GeForce4 MX460.

Unfortunately, the 'GeForce4' name isn't very helpful and will cause a lot of confusion. While all GeForce3 cards sport the programmable shader engine ('nfiniteFX'), not all GeForce4 cards do. As a result, GeForce3 cards are actually far more advanced than GeForce4 MX cards! Be aware of that!

While GeForce4 Ti is the pinnacle of today's mainstream 3D chip technology, GeForce4 MX is merely an evolution of GeForce2 MX technology. GeForce4 MX does not contain an nfiniteFX engine and therefore lacks full DirectX 8.x functionality as well.

Based on the GeForce2 MX design, GeForce4 MX has an acceptable foundation, even though it lacks the new vertex and pixel shaders. Fortunately, NVIDIA did several things to make GeForce4 MX more powerful than GeForce2 MX.

- Based on GeForce2 MX GPU

- Manufactured in TSMC's 0.15 µm process

- Chip clock 250 - 300 MHz

- Memory clock 166 - 550 MHz

- Memory bandwidth 2,600 - 8,800 MB/s

- Accuview Anti Aliasing

- Light Speed Memory Architecture II (a stripped-down version of GeForce4 Ti's)

- nView

- VPE

The major advances of GeForce4 MX over previous GeForce2 MX cards are, of course, the higher chip clock and LMA II with its two-segment crossbar memory controller (GeForce3 and GeForce4 Ti have four segments), which increases the effective memory bandwidth. Memory bandwidth, or rather the lack of it, is what used to cripple the performance of GeForce2 MX cards, so GeForce4 MX should do better. It remains questionable whether the 'Accuview AA' feature makes much sense here, since the specs don't seem powerful enough to ensure reasonable frame rates with AA enabled. We'll look into that when we get to the benchmarks.
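Why more, narrower crossbar segments help can be shown with a little arithmetic. A minimal sketch, assuming 256 bits move per memory clock across the whole interface (a 128-bit DDR bus), that each segment is addressed independently so the access granularity is 256 bits divided by the segment count, and a made-up mix of small requests:

```python
import math

BITS_PER_CLOCK = 256  # assumption: 128-bit DDR interface moves 256 bits per clock

def bus_efficiency(request_bits, partitions):
    """Share of transferred bits that were actually requested."""
    granularity = BITS_PER_CLOCK // partitions  # smallest chunk one partition can fetch
    transferred = sum(math.ceil(r / granularity) * granularity for r in request_bits)
    return sum(request_bits) / transferred

requests = [64, 128, 192, 256, 64, 96]  # hypothetical request sizes in bits
for partitions in (1, 2, 4):
    print(partitions, f"{bus_efficiency(requests, partitions):.3f}")
```

In this model, a single 256-bit controller wastes almost half of what it transfers for this request mix, the two-segment layout of GeForce4 MX recovers much of that waste, and four segments recover more.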

These are the different flavors of GeForce4 MX:

| | GeForce4 MX460 | GeForce4 MX440 | GeForce4 MX420 | GeForce2 MX400 |
|---|---|---|---|---|
| Chip Clock | 300 MHz | 270 MHz | 250 MHz | 200 MHz |
| Memory Clock | 550 MHz (DDR) | 400 MHz (DDR) | 166 MHz (SDR) | 166 MHz (SDR) |
| Amount of Memory | 64 MB | 64 MB | 64 MB | 64 MB |
| Memory Bandwidth | 8,800 MB/s | 6,400 MB/s | 2,656 MB/s | 2,656 MB/s |
| Theoretical Fill Rate | 600 Mpixel/s | 540 Mpixel/s | 500 Mpixel/s | 400 Mpixel/s |
| Price | $179 | $149 | $99 | $50 |

The cards come with these key features:

- 6-layer design

- 3.8 ns Samsung 4Mx32 high-speed memory in micro-BGA packages on the GeForce4 MX460

VPE - NVIDIA Video Processing Engine

All GeForce4 MX cards are now finally equipped with decent hardware support for DVD playback. The VPE white paper says that GeForce4 MX supports hardware motion compensation and iDCT, plus all the other hardware features needed for MPEG-2 playback and recording. It's nice to finally see that happen, since ATi products have had this feature for years.

GeForce4 MX has finally been equipped with a built-in video encoder for video-out. Until now, the video output quality of NVIDIA cards has been a real pain. The output quality of the new GeForce4 MX should be better, even though our tests did not show any major quality improvement. The output of an S3 Savage IX notebook chip was far better.

Right after I received the two cards, I had to test the video output quality of the GeForce4 MX460. To do this quickly, I installed it in the multimedia PC in my living room, which is hooked up to the stereo to play SWR3 web radio all day (which I can't live without) and to my TV, to play back copied DVDs from my home network. This system is equipped with an Asus A7V266-E motherboard with VIA's Apollo KT266A chipset, an AthlonXP 2000+ processor, lots of memory and other nifty things.

Once the 27.30 driver was installed, the system with the GeForce4 MX460 would not start Windows XP as long as the card's video-out was connected to the TV. I called Lars in Germany, and he tried a similar configuration, an AMD760 platform with an AthlonXP 1900+. His system also refused to run as soon as the video-out of the GeForce4 MX460 was hooked up to a television.

It seems that Athlon plus GeForce4 MX plus television is not a good combination. NVIDIA was very surprised, but also not particularly fussed. While NVIDIA likes to point fingers at ATi's driver issues, it seems to consider itself infallible. The test guys who ought to have caught this driver bug seem to be busy selling their stock or counting their money instead. That's how times change! A few years ago, somebody at NVIDIA would have been willing and able to fix a bug of this kind. Today, nada ...

| Hardware (Socket 478) | |
|---|---|
| CPU | Intel Pentium 4 2200 MHz, 400 MHz QDR FSB |
| Motherboard | ASUS P4T-E, Intel i850 |
| Memory | 256 MB 400 MHz RDRAM (2x 128 MB) |
| Hard Disk | Seagate 12 GB ST313021A, UDMA66, 5400 rpm |
| Driver and Software | |
| NVIDIA Cards | v27.30 |
| ATI Cards | Alternate Driver - 6.13.10.6025 |
| DirectX Version | 8.1 |
| OS | Windows XP Professional |
| Benchmarks and Settings | |
| Quake3 | v1.17 OpenGL with HW Transformation Support (Demo001) |
| Aquanox | DirectX 8 Game |
| 3DMark 2001 | Synthetic DirectX 8 Benchmark |

In Giants, which is based on DirectX 7, there's less of a difference in performance. The rest of the system seems to put a strong limit on performance here; this is the only way to explain the relatively small performance difference between the GeForce4 Ti boards. Here, the MX440 is at the GeForce2 Ti level, and the MX460 does not quite reach the GeForce3 Ti200.

In this benchmark, the GeForce4 Ti4600 with 2x FSAA performs at about the level of the GeForce3 Ti200 without FSAA.

In Max Payne, a DirectX 8 game, the differences are significantly larger. Here, even the "smaller" GeForce4 Ti is ahead of the Ti500. At 1600x1200, the GeForce4 Ti4600 achieved double the frame rate of ATI's RADEON 8500! The MX460 was also surprising: it beat the GeForce3 Ti200 by a nose.

At 1024x768, the Ti4600 with the 2x FSAA activated is faster than the GeForce3 Ti500 without FSAA! Even at 1280x1024, the Ti4600 with 2X reaches the scores of the Ti500. In contrast, the performance loss of the GeForce4 MX460 with 2X FSAA is much more evident than with the Ti500 series.

The DirectX 8 3D engine of Aquanox uses pixel and vertex shaders. Nevertheless, the MX460 is able to stay at the Ti200 performance level, thanks to its fast GPU and memory clocks. The Ti500 stays just a bit ahead of the RADEON 8500 in this discipline. Meanwhile, the GeForce4 Ti series demonstrates the power of its dual vertex shaders in Aquanox: the Ti4600 runs twice as fast as the R8500 and Ti500.

In Quake 3, the MX460 positions itself between the GeForce3 Ti500 and Ti200. Meanwhile, the MX440 clearly trounces the GeForce2 Ti. The GeForce4 Ti4200 is only slightly ahead of the RADEON 8500 and the GeForce3 Ti500, while the larger models of the series climb further up the ladder. Whether 136 fps at 1600x1200 is really necessary remains questionable. What's more impressive is the 70 fps of the Ti4600 with 2x FSAA, which is almost at the level of a GeForce3 Ti200.

3DMark skews the picture somewhat. This synthetic benchmark lets the RADEON 8500 flex its muscles and make its way ahead of the GeForce3 Ti500. However, the GeForce4 Ti cards pull ahead of the rest. Even the "smaller" Ti4200 is out of reach for the R8500. The MX460 is slightly below the performance of the GeForce3 Ti200, and the MX440 clearly beats the GeForce2 Ti.

In the FSAA arena, the GeForce4 Ti4600 delivers impressive results. With 2x FSAA and with Quincunx, it is almost neck and neck with the RADEON 8500 and Ti500 without FSAA. In Quincunx mode, the MX460 even beats the Ti500 and RADEON 8500! The RADEON 8500 also lags behind the MX460 in 4x.

With 2x and Quincunx, the GeForce4 Ti4600 almost reaches the normal level of the GeForce3. The RADEON 8500 clearly falls behind in 4x. The MX460 bravely stands its ground, positioned behind the Ti500 at a respectable distance.

As usual, NVIDIA introduces its new products with impressive technology demos. The following shows some screenshots from the CODECULT 3D engine.

Codecult CODECREATURES Demo

Codecult is a German developer working under the aegis of Phenomedia. For the launch of the GeForce4, it presents a demo that takes advantage of the capabilities of modern graphics cards.

The demo runs best on 128 MB cards, since 64 MB boards have to fall back on AGP memory. We asked the developers for their opinion on the new GeForce4.

THG: What do you think of the Vertex Shaders in general and the double Vertex Shaders of the GeForce4?

Kurt Pelzer, Senior Programmer: In principle, anything that increases performance is useful. Programmable Vertex Shaders in particular are a must for the future, and as no modifications to existing code are required to benefit from the second VS, it is an ideal extension of the available architecture for any user.

THG: What's your view on NVIDIA leaving out ATI's Pixel Shader version 1.4?

Stefan Herget, Managing Director of Codecult: Thanks to its extremely modular and flexible architecture, the CODECREATURES engine is freely extensible and supports PS 1.4. Game developers can implement their own PS effects, so it is up to them whether version 1.4 or only 1.1 or 1.3 is supported. The library of prefabricated shader effects that we provide will first be optimized for the common hardware base and will therefore use version 1.1 or 1.3, although the engine already supports 1.4. Later, we will extend our effect library directly to version 2.0.

Do we really need FSAA or not? Opinions vary according to personal taste and type of game. The edge smoothing itself is a clear advantage, although with multisampling, textures can sometimes turn out a bit blurry.

ATI, with its SmoothVision, and NVIDIA, with its Accuview, take different approaches to FSAA, so it is difficult to make a fair comparison. For example, ATI lets you choose between Performance and Quality settings for each FSAA level, each of which affects performance accordingly. In addition, ATI says that it filters more than NVIDIA, which would explain the performance difference in the FSAA benchmarks. But when comparing the results here, you get the impression that Accuview is simply better, which accounts for its performance lead.

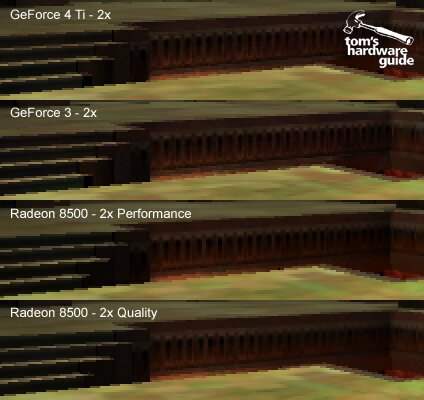

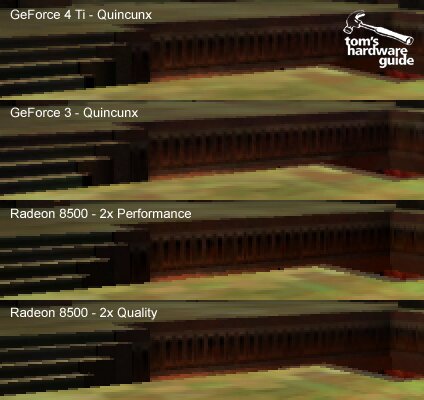

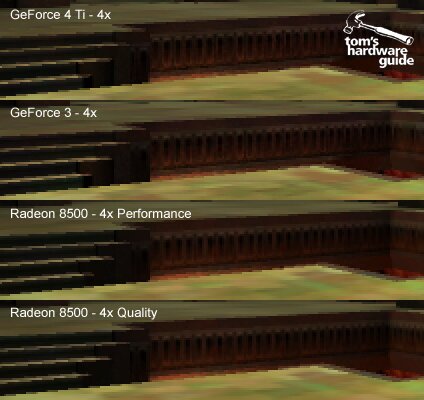

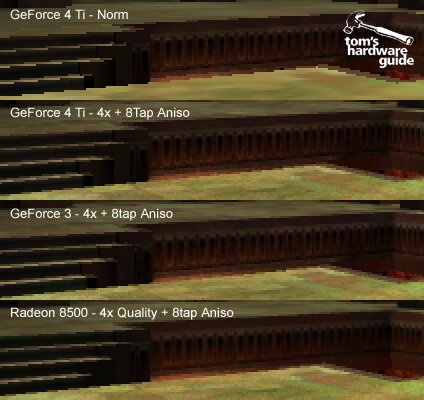

The following pictures compare the various FSAA images from GeForce4 Ti, GeForce3 and RADEON 8500. Each excerpt has been enlarged by 200%.

Without FSAA, the images from all three cards are absolutely identical. The coarse, jagged edges are clearly noticeable on the diagonal lines.

The two NVIDIA cards provide almost identical results. With the R8500, you can clearly see that the smoothing of the edges in Performance mode is not as good.

NVIDIA's special Quincunx mode smooths the edges significantly better than 2x, but it produces images that are noticeably less sharp. It's hard to tell the difference between GeForce3 and GeForce4.

In 4x mode, the ground texture is a bit clearer with the NVIDIA cards than with the RADEON 8500.

The last mode shown does not exist in this form. Anisotropic filtering ensures clearer representation of textures that appear at a flat angle in relation to the viewer, such as the ground textures. However, these calculations are processor-intensive.

The difference compared to the standard image is huge. However, once again, it's hard to tell the difference between the individual cards.

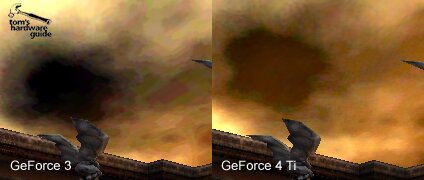

With GeForce4, NVIDIA solves a problem that has been criticized ever since the first-generation GeForce: the poor rendering quality of DXT1 texture compression. Because these textures were rendered at 16-bit color depth, they looked very coarse, and so did their colors. The sky in Quake 3 is just one example.

NVIDIA finally eliminates this problem with the GeForce4.

The difference between GeForce3 (left) and GeForce4 (right) is huge.
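For readers curious where the banding comes from: a DXT1 block stores two 16-bit (RGB565) endpoint colors plus a 2-bit index per texel, and the two in-between colors are interpolated from the endpoints. A commonly given explanation for the old GeForce artifacts is that those interpolated colors were kept at 16-bit precision. This sketch decodes one block and, with the hypothetical `keep_16bit` flag, models that assumed low-precision path; the floor rounding used for the thirds is also our own choice:

```python
def rgb565_to_rgb888(c):
    """Expand a 16-bit RGB565 color to 8 bits per channel."""
    r, g, b = (c >> 11) & 0x1F, (c >> 5) & 0x3F, c & 0x1F
    return ((r << 3) | (r >> 2), (g << 2) | (g >> 4), (b << 3) | (b >> 2))

def requantize_565(rgb):
    """Round-trip an 8-bit color through RGB565 (the assumed low-precision path)."""
    r, g, b = rgb
    return rgb565_to_rgb888(((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3))

def dxt1_palette(c0, c1, keep_16bit=False):
    p0, p1 = rgb565_to_rgb888(c0), rgb565_to_rgb888(c1)
    if c0 > c1:  # four-color mode: interpolated colors at 1/3 and 2/3
        p2 = tuple((2 * a + b) // 3 for a, b in zip(p0, p1))
        p3 = tuple((a + 2 * b) // 3 for a, b in zip(p0, p1))
    else:        # three-color mode: midpoint plus transparent black
        p2 = tuple((a + b) // 2 for a, b in zip(p0, p1))
        p3 = (0, 0, 0)
    palette = [p0, p1, p2, p3]
    return [requantize_565(p) for p in palette] if keep_16bit else palette

def decode_dxt1_block(c0, c1, indices, keep_16bit=False):
    """Decode 16 texels; indices holds 2 bits per texel, texel 0 in the lowest bits."""
    palette = dxt1_palette(c0, c1, keep_16bit)
    return [palette[(indices >> (2 * i)) & 0b11] for i in range(16)]

# A subtle red gradient: the endpoints differ by one RGB565 red step.
c0, c1 = 17 << 11, 16 << 11
print([p[0] for p in dxt1_palette(c0, c1)])
print([p[0] for p in dxt1_palette(c0, c1, keep_16bit=True)])
```

At full precision the block has four distinct shades of red; forced through 16 bits, they collapse to two, which is exactly the kind of banding that shows up in a smooth sky.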

Anisotropic filtering does enable significantly better image quality, but at the same time, it gobbles up a considerable amount of processing power.

The RADEON 8500 just manages to outclass the GeForce3 and 4. However, ATI uses a trick with the RADEON 8500. ATI's David Nalasco comments:

"The Radeon 8500 only uses the full number of samples in parts of the image where they are really needed, which saves bandwidth while still delivering full anisotropic image quality - just another example of the 'intelligent architecture' that saves bandwidth whenever possible."

So, the driver dynamically decides which textures should be filtered anisotropically. For example, if you stand in front of a wall, there's no advantage to filtering its texture anisotropically. In the screenshots, you cannot see any loss in quality. Unfortunately, during a benchmark run at over 180 fps, it is difficult to see how much is really being filtered, so the result should be viewed with a certain amount of skepticism. Also, the small performance lead that the GeForce4 Ti4600 has over the GeForce3 Ti500 is disappointing.
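The general idea of adapting the sample count can be sketched with the per-pixel formula suggested in OpenGL's EXT_texture_filter_anisotropic specification: compare how far the texture footprint stretches along the two screen axes, and take extra samples only where it is elongated. This is a generic illustration, not ATI's or NVIDIA's actual (undisclosed) algorithm, and the derivative values are made up:

```python
import math

def aniso_samples(dudx, dvdx, dudy, dvdy, max_aniso=16):
    """Texture samples needed for one pixel, from its texture-coordinate derivatives."""
    px = math.hypot(dudx, dvdx)   # footprint length along screen x
    py = math.hypot(dudy, dvdy)   # footprint length along screen y
    p_max, p_min = max(px, py), min(px, py)
    if p_min == 0.0:
        return 1 if p_max == 0.0 else max_aniso
    return min(max_aniso, math.ceil(p_max / p_min))

# A wall seen head-on: the footprint is square, so one sample is enough.
wall = aniso_samples(1.0, 0.0, 0.0, 1.0)
# A floor at a shallow angle: the footprint is stretched 8:1 along screen y.
floor = aniso_samples(1.0, 0.0, 0.0, 8.0)
print(wall, floor)
```

A driver that always used the maximum setting would also spend 16 samples on the wall; skipping that work is presumably where the bandwidth savings mentioned in ATI's quote come from.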

NVIDIA blessed us with a whole lot of new products today, and it's really not easy to keep track of them all. Let's start with the 'little' GeForce4, the GeForce4 MX line of cards.

When you look at the three different GeForce4 MX offerings, it is easy to see that the low-end version, the GeForce4 MX420, will be a product for OEMs only. Its 166 MHz SDRAM memory will slow down the performance of the GeForce4 MX chip so much that it will hardly perform any better than previous GeForce2 MX400 cards that you can get for a mere 50 bucks.

The performance of the GeForce4 MX440 and MX460 cards, however, proved to be very impressive. The GeForce4 MX460 in particular scores results as high as those of the GeForce3 Ti200, or even the Ti500. Both are able to beat ATi's Radeon 7500 in most cases.

Still, none of the GeForce4 MX cards has full DirectX 8.x support, because they lack vertex and pixel shaders. This weighs heavily when you consider the lowest-priced card of the GeForce4 Ti bunch, the Ti4200, which costs only $20 more than the GeForce4 MX460.

I therefore suggest the GeForce4 MX440 for value buyers, but they should try to get the price down to less than $149. Better yet, a value buyer should watch out for GeForce3 cards. Any GeForce3 card that costs less than $190 is a bargain right now. GeForce3 comes with full DirectX 8.x functionality, vertex/pixel shaders and lots of 3D power! If you can choose between a GeForce3 card and a GeForce4 MX card at similar prices, I would clearly go for the GeForce3!

Now let's get to the GeForce4 Ti line of cards. These cards represent the very best of today's 3D. Two vertex shaders and an extremely efficient memory subsystem speak for themselves. Each card holds its own in the benchmarks, and each card is able to beat its GeForce3-branded predecessor. This is impressive, indeed!

It doesn't really matter which GeForce4 Ti card you choose. You will always be a winner. If you can only spend $199, then take the GeForce4 Ti4200 and be merry, because it is still faster than the former performance leader GeForce3 Ti500. Speaking of which - I hope that no dealer still has these cards in stock. GeForce3 Ti500 can now only sell at price points of $149-179. This is a steep drop, considering its price as of yesterday, which was $299. Who would pay that when he can get a faster product for $100 less?

The GeForce4 Ti4400 is a good product, too. It's well ahead of GeForce3 Ti500 and its brother GeForce4 Ti4200, but for $299 it's still acceptably priced. Only the GeForce4 Ti4600 is for people who don't care about how much money they spend. It's for those who want simply the best. For a hefty $399, they'll get the best 3D card that money can buy right now.

Some moaners are criticizing GeForce4 Ti for its lack of really new technologies. I would like to remind those people of the performance jump we are seeing here. Isn't that what really counts? GeForce4 Ti has so much power that it can run with anti-aliasing enabled in virtually any game right now. Features alone don't win customers; they have to make sense, and the performance has to be right, too. GeForce4 Ti seems to be a clear winner here.

What I'd like to rant about is the unwise idea to use the same name for the new top 3D performing chip as well as the technologically backward value product. The name 'GeForce4' has already lost its meaning on the day of its introduction. On the one hand it stands for top-notch 3D power, on the other hand it stands for old technology from times before GeForce3.

Last but not least, I'd commend NVIDIA on the decision to equip all their new cards with dual display capabilities. Two displays instead of only one can make your work more efficient indeed. We know, of course, that NVIDIA did not exactly invent this idea. This credit goes to Matrox. It's too bad that only GeForce4 MX was equipped with an integrated video encoder. I can only hope that NVIDIA will finally manage to bring its video output quality on par with S3 and ATi. So far NVIDIA has been down in the dumps as far as that feature is concerned.