FSMN Articles

Presentation to FSMN in August 2004 by Ray Tomes

The Wave Structure of Matter

Here is a quotation on what de Broglie believed, from a paper by A. P. Kirilyuk (1999) titled “75 Years of Matter Wave: Louis de Broglie and Renaissance of the Causally Complete Knowledge”.

ABSTRACT.

“A physically real wave associated to any moving particle and propagating in a surrounding material medium was introduced by Louis de Broglie in a series of short notes in 1923 and in the most complete form in his thesis defended in Paris on the 25th November 1924. This result, recognised by the Nobel Prize in 1929, gave rise to the whole field of the ‘new physics’ known today as ‘quantum mechanics’. However, although such notions as ‘de Broglie wavelength’ and ‘wave-particle duality’ have become ‘conventional’ in the standard quantum theory, it actually only takes for granted (postulates) the formula for the wavelength (similar to other its formally postulated ‘results’) and totally ignores the underlying causal, physically real and transparent picture of quantum/wave behaviour outlined by Louis de Broglie in his thesis and further considerably developed in his later works, in the form of ‘double solution’ and ‘hidden thermodynamics’ concepts (the first of them is only mechanistically imitated within the over-simplified ‘interpretation’ of ‘Bohmian mechanics’, now often presented as ‘causal de Broglie-Bohm theory’). The payment for such crude deviation from the basic de Broglian realism is the absolute domination of the fundamental science by purely abstract, detached from reality and mechanistically simplified schemes made of formal symbols and rules that has inevitably led it into the deep impasse judiciously described today as the ‘end of science’. However, an independent approach of the ‘quantum field mechanics’ (quant-ph/9902015,16, gr-qc/9906077) created recently within the ‘universal science of complexity’ and the related ‘dynamic redundance paradigm’ (physics/9806002) leads to confirmation and natural completion of unreduced de Broglie's results eliminating all their ‘difficult points’ and reconstituting the causally complete, totally adequate and naturally unified picture of the objective reality directly extendible to all higher levels of the complex world dynamics.”

A few years after de Broglie's insight into the wave nature of matter, Schroedinger was able to formulate his famous equation for the development of quantum fields, and again, he believed that his wave equation described real waves and not just probabilities as Bohr and others were later to interpret them. Certainly it cannot be denied that quantum mechanics often deals with probabilities, but Einstein's famous “God does not play dice” remark makes clear the belief that something real is happening underneath the probabilities, and the nearer that we can get to that, the better we can understand nature. Later it will be demonstrated that it is possible to go below this supposed probability limit.

Before going on to look at some aspects of realistic models of QM and related issues, it would first be helpful to cast aside once and forever some of the nonsense that is spouted in the name of science in connection with QM. Perhaps the part of quantum mechanics that is most often portrayed in popular books as impossible to comprehend and thoroughly quantumly weird is the experiment variously known as the EPR experiment, the Aspect experiment or Bell's inequality. It is not only popular books that do this. Many physicists believe something similar, but there are some, such as Trevor Marshall, who do not. Several people, including David Elm and Caroline Thompson, have made statistical arguments showing that Bell's inequality results from a proper understanding of conditional probabilities.

In the mid-1990s I came across David arguing with some physicists about these experiments in an internet group. He had a very simple realistic model that produced the same results as the EPR experiments by tossing coins. The physicists were arguing, not with his physics, but with his statistics. He was unable to get them to understand the difference between a sample (being in this case all the quantum emissions in a time interval) and a sub-sample (being all the events observed at both of two locations). The sub-sample is biased compared to the sample because some data points at B are removed when A did not detect them, and this bias is the sole reason that some physicists think that something happened instantaneously between A and B. No such thing happened in nature, only in the maths and minds of the physicists. After explaining to the physicists that David was quite correct about conditional probabilities, I was amazed to find a week later on David's web site a comment to the effect that “after some years of telling people about this, Ray Tomes is the first person to understand what I am saying”.

Caroline, whom I visited in Wales a few years ago, has had a paper published in a peer-reviewed journal that shows the errors in many previously published papers. A number of these authors have acknowledged to her that she is correct. However, further erroneous papers are continuing to be published, and Caroline's further rebuttal of these is not published “because you have already published that”. Her protestation that the journals have continued to re-publish errors and that these need to be pointed out is ignored.

Neither David, Caroline nor I are “real physicists”, but rather people who see the statistical errors in QM. Trevor Marshall, however, is a real physicist, and he also considers that “Quantum Mechanics is not Science”, to quote his web site. He goes on to ask “But didn't the Aspect experiment show Einstein was wrong?” and to reply “No, it didn't. The celebrated experiment of that name was done in 1981 and was a refinement of what Clauser and Freedman did in 1972. Neither of them established that locality is violated.”

The statistical error in the EPR experiments is a slightly more complicated one than the error in what is called “the collapse of the wave-function” which is a commonly described method in QM. Although it is commonly used it is quite possible to not use this concept, and the prestigious McGraw-Hill Encyclopaedia of Science and Technology does not even have the term “collapse of the wave-function” in it in spite of having two or three volumes of its twenty volumes devoted to QM.

Here is an example from everyday experience which makes clear the real meaning of “collapse of the wave-function” which is simply that our conditional probabilities have been updated.

Remember those games that they had in dairies before the invention of space invader electronic games? There was one in which you flicked a ball with a lever and tried to land it in the win pockets rather than the lose ones. The ball entered at the top and bounced on a series of pins which deflected it either left or right at each point, so that it usually did not end up at the extreme left or right, which is where the win pockets were.

Now suppose that we do this in the dark. After the noise stops we turn on the lights and, if we do it enough times, we end up with a distribution like that shown in the upper diagram. But what if someone takes a flash photo on the way down? Does this alter the path of the ball? Well perhaps the light has a minuscule effect, but in essence it does not. However if we look at our photo then we can see the ball somewhere along the way as in the second picture. The probability distribution for the final position of the ball is now, as shown, quite different because of the new knowledge that we have.

Let us be quite clear about what this means. The taking of or looking at the photo did not cause anything weird to happen, but it did alter our perceived probability of where the ball would end up. This is not in any way related to us being conscious beings but it is related to the new information that we have. This is a parallel situation to the one called “collapse of the wave-function” in QM and equally mysterious (not).

The collapse of the wave-function is nothing more nor less than the updating of the information that we have about the possible location of a particle. It has absolutely no physical effect on the particle or anything else which continues to move under the laws of nature. All that changes is that we now know that there are some places where the particle might previously have been that have been eliminated as possibilities. Does anyone think that something mysterious happened here? I don't think so. Likewise for QM.
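The updating of probabilities in the pin game can be sketched numerically. This is only an illustration under stated assumptions that are not in the original talk: each pin deflects the ball left or right with equal probability, the board has 8 rows of pins (`ROWS`), and a pocket is numbered by how many rightward bounces the ball made. The function name `final_distribution` is hypothetical.

```python
from math import comb

def final_distribution(rows, seen_row=0, seen_pos=0):
    """Probability of each final pocket (0..rows), given that the ball is
    known to have made `seen_pos` rightward bounces after `seen_row` rows.
    Assumes each pin sends the ball left or right with probability 1/2."""
    remaining = rows - seen_row
    probs = [0.0] * (rows + 1)
    for k in range(remaining + 1):
        # k further rightward bounces out of the remaining rows
        probs[seen_pos + k] = comb(remaining, k) / 2 ** remaining
    return probs

ROWS = 8  # assumed board size, for illustration only

# In the dark: nothing is known about the path.
prior = final_distribution(ROWS)

# After the flash photo: the ball was seen after 5 rows with 4 rightward bounces.
updated = final_distribution(ROWS, seen_row=5, seen_pos=4)

print("before photo:", [round(p, 3) for p in prior])
print("after photo: ", [round(p, 3) for p in updated])
```

The ball follows the same path in both cases. Only the second list, our estimate of where it will land, is narrower, because the photo has ruled out some pockets.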

It is possible to describe QM without the collapse of the wave-function as already mentioned, and such description is much nearer to common everyday experience. However the EPR experiment has no such commonly accepted explanation. It is the “commonly accepted” part that is the problem. There are two statements about the EPR experiment that are commonly made and that are quite contradictory. These do not prove weirdness of nature, but weirdness of thinking.

Firstly it is admitted that information cannot be sent from A to B (which would be in violation of relativity through being faster than light) by doing the following experiment. This first statement is true. If information cannot be sent, then it follows that there cannot be an effect on B from the measurement at A.

Secondly it is claimed that two entangled (meaning produced together) particles (although the experiments have only ever been done with light) can be observed by some apparatus in a way whereby the particular form of the measurement done at A influences the outcome at B. This totally contradicts the first statement and is without doubt erroneous as will be explained.

How does such a state of affairs come about? Well the EPR experiment was designed for particles and then done with light instead. It is supposed to depend on the spin of particles which in the light experiments is the measurement of polarisation. In the light experiments each “photon” is either detected or not detected. We don't know when we don't detect a photon that passed by, only when we do. The detection means that the photon showed up after going through a polarising filter (the same thing as polarising sunglasses).

Anyone who has any interest in realistic models of nature would consider a sensible model to include allowance for the fact that only a portion of light passes through such a filter, and that the proportion depends on the real light polarisation angle relative to the angle of the filter. When the filters at A and B are at the same orientation then they tend to detect the same photons whereas when they are at right angles they tend to detect different photons from each other. However the assumption is made that the detected sample is representative of all photons, a clearly wrong assumption. All events where A detects a photon and B does not, or vice versa, are discarded from the analysis. This bias in selection accounts for the sine squared nature of the variation whereas it is claimed that only a sine relationship should exist for realistic models.
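The statement that aligned filters tend to detect the same photons, and crossed filters different ones, can be checked with a small calculation. This is a sketch under assumptions that go beyond the text: both photons of a pair share one real polarisation angle, that angle is uniformly distributed, and each filter passes a photon with probability cos² of the angle between the polarisation and the filter (Malus's law), independently at A and B. It is not David Elm's or Caroline Thompson's model, and the function name `detection_rates` is hypothetical.

```python
from math import cos, pi, radians

def detection_rates(angle_a_deg, angle_b_deg, steps=3600):
    """Average over a uniformly distributed shared polarisation angle.
    Returns (P(A detects), P(B detects), P(both detect)).
    Assumed model: detection probability is cos^2(polarisation - filter angle)."""
    a = radians(angle_a_deg)
    b = radians(angle_b_deg)
    sum_a = sum_b = sum_both = 0.0
    for i in range(steps):
        lam = pi * i / steps            # shared polarisation angle in [0, pi)
        p_a = cos(lam - a) ** 2         # chance that filter A passes the photon
        p_b = cos(lam - b) ** 2         # chance that filter B passes the photon
        sum_a += p_a
        sum_b += p_b
        sum_both += p_a * p_b           # both detect: the retained sub-sample
    return sum_a / steps, sum_b / steps, sum_both / steps

for relative_angle in (0, 45, 90):
    rate_a, rate_b, rate_both = detection_rates(0, relative_angle)
    share = rate_both / rate_a          # fraction of A's detections also seen at B
    print(relative_angle, round(rate_a, 3), round(rate_both, 3), round(share, 3))
```

Under these assumptions each detector alone fires on half of the emissions at every setting, while the fraction of A's detections that survive into the coincidence sub-sample changes with the relative angle of the filters. The sketch shows only that the retained sub-sample depends on the settings; it makes no claim about the shape of the experimental curves.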

Von Neumann claimed to have proven that realistic models could not possibly account for the results. His proof has subsequently been accepted as being flawed. Aspect also begged the question by assuming that QM was true, and then, mistakenly, his results are taken to show that realistic models cannot be true. And still no-one can explain, if all these things are as QM physicists say they are, how to account for David Elm's very simple results that anyone can reproduce with coin tossing, or for Caroline's paper which offers a working realistic model that yields the same results.

In the mid 1990s I was introduced by an American email friend to some Russian researchers who studied fluctuations in biophysical, chemical, biological and nuclear decay reaction rates. I was fascinated by their research and they supplied me with some of their data, which I then analysed. Soon afterwards I made a trip to Russia to participate in several of their conferences and to hold a seminar on my own work in the Harmonics theory.

The researchers in the Biophysics laboratory of the Russian Academy of Sciences in Pushchino, south of Moscow, are headed by Simon Schnol. They continued and extended the Italian Piccardi's work on variations in the rate of certain chemical reactions into biological growth rates and then nuclear decay rates. They found that nuclear decay is not totally random but that certain similarities occur after specific time intervals such as 1 day, 1 lunar month and 1 year. These similarities appear when, for example, measurements taken at 60 intervals of 1 minute each are combined into a single histogram. The expected result is an approximately normal distribution which is what is found.

However when histograms made 1 hour apart are compared they are found to be more similar than those made say 15 hours apart. Also, those made 24 hours apart suddenly become more similar again. When these results were first obtained sceptical physicists suggested that it must be some environmental effect such as temperature or humidity affecting the apparatus. All known environmental variables were measured and controlled to eliminate them as possible causes, but the histograms continued to show similarities in adjacent hours and also after 1 day, 1 month and 1 year.
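The comparison of histograms can be sketched as follows. The talk does not say how similarity was judged, so the measure used here (histogram intersection) is an assumed stand-in, and the function names `histogram` and `overlap` are hypothetical. The two short lists of counts are invented placeholders to show the mechanics, not real decay data.

```python
from collections import Counter

def histogram(counts, bin_width=1):
    """Normalised histogram: fraction of readings falling in each bin."""
    bins = Counter(c // bin_width for c in counts)
    total = len(counts)
    return {b: n / total for b, n in bins.items()}

def overlap(h1, h2):
    """Histogram intersection (an assumed similarity measure):
    1.0 means identical shapes, 0.0 means no bins in common."""
    return sum(min(h1.get(b, 0.0), h2.get(b, 0.0)) for b in set(h1) | set(h2))

# Invented placeholder readings (counts per minute), NOT real measurements.
hour_a = [100, 101, 101, 102]
hour_b = [101, 101, 102, 103]

similarity = overlap(histogram(hour_a), histogram(hour_b))
print(similarity)
```

In a real analysis each histogram would be built from the 60 one-minute readings of an hour, and the similarity would be tabulated against the time separation between the two hours.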

Further tests involved comparing measurements taken at locations 1000 km apart and it was found that the histograms were similar. Eventually it was found that similar histograms were formed from biological and chemical tests as from the radioactive decay ones - that is, all these phenomena are correlated with each other.

My own analysis of the Russian radioactive decay data involved looking for shorter term cycles. I predicted that 3 and 6 minute cycles would be found because these relate to the light spacings of the inner planets and I believed that there are large standing waves of e/m of these periods present around the earth. I did indeed find such periods and when I visited Russia, Dr Udaltsova confirmed that these two periods were present throughout 11 years of data that she had examined using a similar method to mine.
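A search for a cycle of a given period can be sketched as a single-frequency Fourier projection. The method actually used on the Russian data is not described here, so this is an assumed generic approach, and the function name `amplitude_at_period` is hypothetical. The test series is synthetic (a constant level plus a 3 minute sine wave, sampled once per minute), not the decay data.

```python
from math import cos, sin, pi, hypot

def amplitude_at_period(samples, period, dt=1.0):
    """Amplitude of the sinusoid of the given period in an evenly sampled
    series, found by projecting the mean-removed data onto sine and cosine.
    `period` and `dt` (sample spacing) must be in the same time unit."""
    n = len(samples)
    mean = sum(samples) / n
    w = 2 * pi * dt / period            # phase advance per sample
    c = sum((x - mean) * cos(w * i) for i, x in enumerate(samples))
    s = sum((x - mean) * sin(w * i) for i, x in enumerate(samples))
    return 2 * hypot(c, s) / n

# Synthetic series: level 100 with a 3 minute cycle of amplitude 2,
# sampled once per minute for 6 hours.
series = [100 + 2 * sin(2 * pi * i / 3) for i in range(360)]

for period in (3, 5, 6):
    print(period, round(amplitude_at_period(series, period), 6))
```

Only the 3 minute period shows a non-zero amplitude in this synthetic series. With real data the amplitudes at the periods of interest would be compared against those at neighbouring periods to judge whether a peak stands out.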

When this is thought about deeply, a view of the world entirely different from the randomness of QM results. If some specific periods are present, and there are very many other known possible candidates on longer and shorter time frames, then they are all likely to be present. In that case there may be nothing random in quantum events at all. Every event may be the result of the sum of all the various waves of e/m energy arriving at any particular place in the cosmos. This is the view that I now hold. I have seen no evidence that indicates otherwise, and many things become much easier to understand as a result.

In this view, space is pervaded everywhere by e/m waves of all frequencies and travelling in all directions. The frequencies range from faster than 10^24 Hz to slower than 10^-18 Hz. The sum total of these waves is what makes everything - there is nothing else in existence. Some of these frequencies form stable spherical standing waves which we know as particles and various other long-lived formations. Some form borderline stable states which can lead to decay if a particular combination of waves hit them at any time. These semi-stable states may be known as electron orbitals which may change when an atom ionizes, or as chemical compound changes, or as unstable nuclear states with various half-lives. The half-life is then a measure of how easily the state is disrupted by the background waves of the universe.

The truth of this is attested to by the fact that certain unstable isotopes are known to decay in the presence of certain e/m frequencies.

A simple example of a bucket of water will now be used to demonstrate how this type of view may lead to a deeper understanding of probability in QM.

Drip Analogy of Light - (Written 16th December 1996 by Ray Tomes)

An analogy to photon behaviour which removes the mystery from QM.

Imagine a tap dripping into a full bucket of water so that waves form and cause drips to fall over the side. Here are some of the salient points about the wave and particle behaviour of the system. [Comparison to QM noted]

In falling drips [emitted photons] cause waves to travel [wave-function or e/m field] and result in out falling drips [absorbed photons] which are discrete events that are causally related ["collapse" of wave-function] to the emissions. This model is only 2D compared to a real 3D world and the vertical dimension has no significance (except that it affects the energy of the emitted wave).

1. Over any long period of time the number of drips falling in and out are the same because the bucket remains equally full. [Conservation of energy]

2. Each drip that falls out is due to sufficient energy arriving at one time at one point on the edge. Part of this energy is from an in falling drip that made a wave travel between the two events. [At the speed of light]

3. In addition to the direct wave arriving at an out falling drip there is a background of ripples left over from previous waves that bounced off the edges. This energy will have some characteristic distribution by frequency. [Zero Point Energy]

4. If inward drips are stopped the background energy very soon reaches a level which is not quite sufficient to cause additional drips out. [Zero Point Field]

5. Any one in falling drip [emitted photon] may result in 0, 1, 2 or more out falling drips [absorbed photons] but must average exactly 1, and as each drip falling out is an independent event [there is no "collapse" of the wave-function] the number of out falling drips related to 1 in falling drip follows a Poisson distribution of mean 1.

6. Each drip that falls out has taken energy from waves locally but does not affect other locations except by the propagation of this energy removal which happens at the wave propagation speed. [No non local effects]

7. Any location at which a drip falls out must have had sufficient energy arriving by convergence at exactly that place and time. [Back action]

8. Although each out falling drip can normally be traced to a particular in falling drip as a cause, only a small part of the energy actually comes from that other drip, which really acts as the final straw in adding to the background energy which was just below the out falling drip threshold.

9. Therefore most of the energy and momentum of the out falling drip did not come from the in falling drip but will be related by the fact of nearly common frequency. [Uncertainty principle]

10. An exception to this will apply when the source and observation are sufficiently close in relation to the wavelength of the particular wave. In that case the uncertainty will be reduced. [Casimir effect???]

11. There is no sense in which the out falling drip *IS* the "same" drip that fell in even though there may be a causal connection between them. Each out falling drip is also related to the waves of many other in falling drips, which have been multiply reflected. [No "photon" identity]

12. Although all drips in and out are of discrete amounts of energy [particle behaviour] there is no such thing as a travelling drip [photon in flight], only the wave nature of the surface [wave behaviour] which is continuous in its behaviour. [Maxwell's equations]
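Point 5 above can be made concrete by tabulating the Poisson distribution of mean 1, whose probability of k out falling drips is e^-1 / k!. The function name `poisson_pmf` is chosen here for illustration.

```python
from math import exp, factorial

def poisson_pmf(k, mean=1.0):
    """Probability of exactly k events for a Poisson distribution."""
    return mean ** k * exp(-mean) / factorial(k)

# Chance that one in falling drip is followed by 0, 1, 2, ... out falling drips.
for k in range(6):
    print(k, round(poisson_pmf(k), 4))

# The average number of out falling drips per in falling drip.
# Terms beyond k = 29 are negligibly small.
average = sum(k * poisson_pmf(k) for k in range(30))
print(round(average, 6))
```

With mean 1, an in falling drip is exactly as likely to be followed by no out falling drip as by exactly one, and the long-run average of one drip out per drip in matches point 1.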

The key point about all this is that space is everywhere filled with waves of many frequencies travelling in many directions. We do not become aware of these waves unless they cause a change in a nearly stable configuration or in other words some reaction occurs. We can certainly say that any wave that travels right on through cannot have any effect and that any wave that is detected has not passed right on through but has had some energetic reaction.

What is the natural low state of this background energy or zero point field? The natural low state is that everywhere that there is matter and at every frequency there is almost enough energy to cause an interaction but usually not quite enough. In the bucket and drip analogy this means that the bucket is full. Any slight disturbance from a drip falling in will on average cause one drip to fall out.

When QM attempts to explain a photon being absorbed in your eye as a result of a photon being emitted by a light, it invokes the idea of collapse because that is the only way that it can explain the whole of the energy of that emitted photon arriving at a single place when the light was expanding outwards as a spherical shell. Well in this model, Maxwell's equations which are totally continuous, are still perfectly valid. The result of QM happens because somewhere the wave from the emitted photon combined with the background energy to cause an event that we know as photon absorption.

There is no such thing as a photon in flight. However all emission and absorption events must be quantised because they have to move from one essentially stable discrete energy state to another. The subtlety of this caused great consternation to physicists a century ago. They observed the quantised behaviour and yet regarded Maxwell's equations as having to be continuous. They did not know about ZPE at that time, and by the time the concept came along the damage to common sense had been done.

We cannot undo history, but we must make science logical again or it gets stuck in a blind alley. There is no good reason that realistic models of quantum events cannot be made. There is no good reason to believe in action at a distance. There is every reason to believe that nature operates on a cause and effect basis without bias and without God playing dice. There is every reason to believe that we can discover a lot more about nature when common sense views about quantum events prevail.

Next, let us look at some real world examples of fairly stable wave structures forming in a non-linear medium. These examples confirmed for me the possibility of a wave structure of matter.

Wave Particles: Oscillons, Cymatics - (written Feb-1997 by Ray Tomes)

Recently there have been reports of interesting wave structures which appear in vibrated trays of small balls or grains of sand. These have been named "Oscillons" and take on a life of their own once they form. They have been observed to attract each other and to form strings and various crystal like structures, either square or hexagonal.

In these discussions I have seen no mention of the work of Hans Jenny who has published under the title "Cymatics" interesting photographs of similar structures that appear in liquids when vibrated rapidly.

On February 17th 1997 my brother Dennis and I made some experiments to see if we could repeat either of these oscillon or cymatics types of waves. We used a normal speaker connected to a computer and turned so that the speaker faced upwards. On top of this we mounted a small dish into which we put shallow water. Then we simply played various frequencies at different volumes to see what happened. We also experimented with different waveforms by adding harmonics.

We had no success with sand in the dish (probably because the speaker didn't have enough power) and it had a nasty tendency to all pile up and climb over the side. However water worked well and we found that at frequencies around 250 to 400 Hz we had interesting formations appear in the centre of the surface of the water. At slightly lower frequencies the activity was around the edges. At about 50 Hz the entire surface was active.

When the sound volume was low simple concentric rings appeared in the circular dish of diameter 6.3 cm. As the volume was raised gradually the central rings became energetic and generally formed a pattern like a flower with petals and then became free from a fixed location. At this intermediate level it was as if a number of "particles" had formed and a phase transition had occurred from solid to liquid. The particles were free to move but maintained certain distances from each other. At still higher volumes the particles travelled about very rapidly and sometimes small jets of water were ejected vertically.

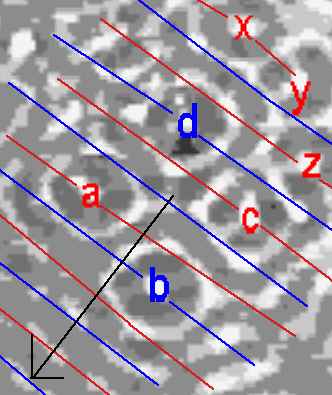

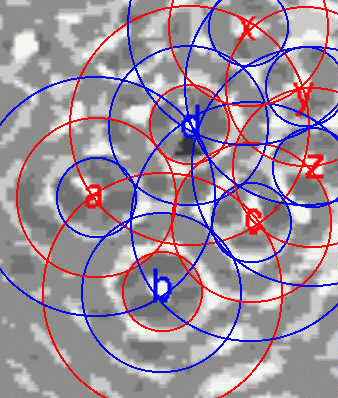

Picture of waves formed with 280 Hz vertical oscillation in a 6.3 cm circular dish. The particle like waves travel about independently when a medium strength oscillation is applied. The right figure is an expansion of the left and the particles at a, b, c, d have distances of 3/2 waves apart while x, y, z are only 2/2 apart. Therefore b and d are 180 degrees out of phase with the other particles labelled. So there are different "bond lengths" present. This is very suggestive that atomic particles are similarly formed as standing waves. Some non-linearity is necessary to have stability. In this case it is supplied by the different rate of acceleration applied to the water from above (by gravity) and below (by pressure). Surface tension applies in both directions.
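The link between spacing and phase in the caption can be written out as simple arithmetic: each half wavelength of separation shifts the phase by 180 degrees. The sketch below assumes that the labelled particles lie in a chain with each one spaced from the next by the stated distance. That layout is a reading of the caption rather than something measured from the figure, and the function name `phases_along_chain` is hypothetical.

```python
def phases_along_chain(spacings_in_half_waves):
    """Phase (degrees, 0 to 359) of each particle in a chain, relative to
    the first, given each successive spacing in half wavelengths."""
    phases = [0]
    for spacing in spacings_in_half_waves:
        # every half wavelength of separation adds 180 degrees of phase
        phases.append((phases[-1] + 180 * spacing) % 360)
    return phases

# a, b, c, d spaced 3/2 waves apart (assumed to form a chain)
print(phases_along_chain([3, 3, 3]))

# x, y, z spaced 2/2 waves apart (assumed to form a chain)
print(phases_along_chain([2, 2]))
```

Under that assumption, spacings of 3/2 waves give alternating phases, so b and d come out opposite to a and c, while spacings of 2/2 waves leave x, y and z all in phase.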

A study of the distances between the labelled particles will give insights into how galaxy and stellar distances can be quantised. The distances are clearly quantised and also depend on the phase of the particles. The left diagram below shows how plane waves can agree in phase with multiple particles and this applies in multiple different directions. In 3D it is even more interesting. The right diagram shows the relationships between phase and distance and makes clear how each particle/wave supports each other one. These are exactly the types of structures that I had expected from the harmonics theory and I believe apply to galaxies and stars, atoms and particles.